- myFICO® Forums

- FICO Scoring and Other Credit Topics

- Understanding FICO® Scoring


Should utilization matter more than debt?


We all know that utilization accounts for almost 1/3 of your FICO score and it seems like utilization is discussed in roughly 1/3 of the threads on this forum. I apologize in advance for adding to that number, but I was thinking today about how utilization with respect to scoring matters far more than balances owed. Does everyone think this makes sense or should be the case? Allow me to illustrate what I mean with an example.

You have two individuals with equal profile data in all areas outside of utilization... payment history, AAoA, inquiries, etc. They also possess equal income levels among other things. One of the individuals has 2 credit cards that he's had for a number of years that have small credit limits as he's never requested CLIs and they were more or less "starter" cards. He's been content with those cards. Conversely, the other individual has 10 credit cards and for a number of years has been hammering CLIs as much as possible, chasing big limits. Now to assign some numbers to this illustration:

Individual 1: 2 credit cards with combined limits of $1500. He owes $700 across the 2 cards, or is at 47% utilization. Certainly not ideal for the utilization sector of FICO scoring.

Individual 2: 10 credit cards with combined limits of $185k. He owes $11,000 across all cards, or is at 6% utilization. In terms of the utilization sector of FICO scoring, this person is in the ideal range and could even increase his debt to $16,650 and still be at 9% utilization, which would still result in excellent scoring with respect to utilization.
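The percentages in the two examples are plain aggregate utilization: total balance divided by total limits. A minimal Python sketch that reproduces them, assuming aggregate (all-cards) utilization only; the function name is illustrative and this is not how FICO computes anything internally:

```python
def utilization(balance: float, total_limit: float) -> float:
    """Aggregate revolving utilization as a percentage of combined credit limits."""
    if total_limit <= 0:
        raise ValueError("total credit limit must be positive")
    return 100.0 * balance / total_limit

# Figures from the example above
individual_1 = utilization(700, 1_500)       # $700 on $1,500 of limits
individual_2 = utilization(11_000, 185_000)  # $11,000 on $185k of limits
ceiling_9pct = 0.09 * 185_000                # debt that would sit at exactly 9%

print(f"Individual 1: {individual_1:.1f}%")
print(f"Individual 2: {individual_2:.1f}%")
print(f"Individual 2 at 9%: ${ceiling_9pct:,.0f}")
```

The unrounded values are about 46.7% and 5.9%, which the post rounds to 47% and 6%.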

I find it surprising that under FICO scoring, the individual in this case with $700 in revolving debt is in a worse place than the individual with $11,000 in revolving debt, or even up to $16,650. Remember, we are talking two individuals with equal income here... could be $50k. If both individuals were to lose their source of income tomorrow, I'd expect the person with $11k-$16k to have a much more difficult time paying back that debt than the individual with $700 in debt. To me, based on the total of revolving debt, it's pretty clear that the individual that owes significantly more poses a greater risk. However, under FICO scoring with respect to the utilization sector, the individual with two tiny credit cards poses the greater risk and his score will reflect that.

Does anyone other than me have an issue with this? I'm not suggesting utilization shouldn't matter - I think it should... but I also think it would be beneficial and more representative of the real picture if it factored in actual dollars. After all, the utilization chunk of the pie chart that makes up 30% of your score is often referred to as "amounts owed" or "how much you owe" which to me suggests dollars would be considered.


Re: Should utilization matter more than debt?

Part of why most loans require some kind of manual underwriting.

Utilization can be a double-edged sword. Good topic!


Re: Should utilization matter more than debt?

@Anonymous wrote: We all know that utilization accounts for almost 1/3 of your FICO score and it seems like utilization is discussed in roughly 1/3 of the threads on this forum. I apologize in advance for adding to that number, but I was thinking today about how utilization with respect to scoring matters far more than balances owed. Does everyone think this makes sense or should be the case? Allow me to illustrate what I mean with an example.


The problem with this, BBS, is that the amount of debt matters in relation to income, and that will be considered in some lending decisions. In a FICO score, however, DTI is not considered at all. Some genius determined that it would be discriminatory to consider income in the scoring model. That is why utilization is so easily manipulated.

Suppose two people with equal $50k incomes both have $2,000 of credit card debt on only 1 of 5 cards. Suppose one of them has actively asked for CLIs and the other never has. Now suppose the one who has is at $100k in total CL, and the other is at $10k total CL. Keeping in mind that all income and debts are equal for these two people, why would the person who just never bothered to get CLIs be at any greater risk of defaulting on this debt? They would not be, yet the FICO score, which is supposed to be risk-predictive, will definitely give a much lower score to the individual at 20% utilization than to the one at 2%.

That is why we all get these outrageous credit limits we will not be using. The risk models are flawed. It is all a game: they make the rules, and we just need to learn how to play their game to win.
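The scenario above can be checked with a few lines of Python. This sketch (illustrative names only) holds the balance and income fixed and varies only the total limit, so the balance-to-income ratio is the same for both people while utilization moves tenfold:

```python
def utilization_pct(balance: float, total_limit: float) -> float:
    """Aggregate utilization as a percentage of total credit limits."""
    return 100.0 * balance / total_limit

balance = 2_000
income = 50_000
limits = {"asked for CLIs": 100_000, "never asked": 10_000}

for label, limit in limits.items():
    util = utilization_pct(balance, limit)
    # Balance relative to income is identical for both people;
    # only utilization changes with the size of the limits.
    print(f"{label}: utilization {util:.0f}%, balance/income {balance / income:.0%}")
```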

EX fico08=815 06/16/24

EQ fico09=809 06/16/24

EX fico09=799 06/16/24

EQ fico bankcard08=838 06/16/24

TU Fico Bankcard 08=847 06/16/24

EQ NG1 fico=802 04/17/21

EQ Resilience index score=58 03/09/21

Unknown score from EX=784 used by Cap1 07/10/20


Re: Should utilization matter more than debt?

@BBS... Just the dollar figure also would not work as a predictor, because $25k in debt is a lot if your annual income is $22k, but is no big deal if your annual income is $500k. The dollar amount of revolving debt could only be indicative of credit troubles if it is compared to a person's income or assets.
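To put numbers on that point, here is a minimal Python sketch comparing the same $25k balance against the two incomes. The ratio used (balance over annual income) is a hypothetical simplification for illustration; the DTI lenders use is usually monthly debt payments over gross monthly income:

```python
def balance_to_income(revolving_debt: float, annual_income: float) -> float:
    """Revolving balance as a fraction of annual income (illustrative ratio only)."""
    if annual_income <= 0:
        raise ValueError("annual income must be positive")
    return revolving_debt / annual_income

low_income = balance_to_income(25_000, 22_000)    # debt exceeds a full year's income
high_income = balance_to_income(25_000, 500_000)  # debt is a small slice of income

print(f"$22k income:  {low_income:.2f}")
print(f"$500k income: {high_income:.2f}")
```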



Re: Should utilization matter more than debt?

@sarge12 wrote: @Anonymous... Just the dollar figure also would not work as a predictor, because 25k in debt is a lot if your annual income is 22k, but is no big deal if your annual income is 500k. The dollar amount of revolving debt could only be indicative of credit troubles if it is compared to a person's income or assets.

Right, dollar amount alone wouldn't mean much... but relative to income it certainly would.

I'm glad I'm not the only one that views scoring and utilization as flawed with respect to this topic.

I think another workaround, one that eliminates income from the equation but still considers dollars of debt, would be to compare debt versus payments. Using my original example of a guy with $700 in revolving debt at 47% aggregate utilization versus a guy with $11,000 in revolving debt at 6% aggregate utilization: what if the guy with $700 in debt is paying down a total of $350/mo while only spending $100/mo across both accounts, while the guy with $11,000 in debt is also paying down $350/mo but is spending $1500/mo? Taking those numbers into consideration, the second guy is putting himself further into debt (a greater risk factor), while the first guy, despite his higher utilization, is on track to be debt-free in 3 months.
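The 3-month figure comes from netting purchases against payments: $350 paid minus $100 spent leaves $250/month of actual paydown, and $700 / $250 = 2.8 months. A minimal Python sketch of that arithmetic, ignoring interest as the post does (the function name is hypothetical):

```python
import math

def months_to_debt_free(balance: float, payments: float, purchases: float) -> float:
    """Months until the balance reaches zero, ignoring interest.

    Returns math.inf when new purchases meet or exceed payments,
    because the balance never shrinks in that case.
    """
    net_paydown = payments - purchases
    if net_paydown <= 0:
        return math.inf
    return balance / net_paydown

first = months_to_debt_free(700, 350, 100)        # $250/month net paydown
second = months_to_debt_free(11_000, 350, 1_500)  # balance grows $1,150/month
print(f"{first:.1f}", second)
```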

Not sure exactly how it could be figured, but something like purchases divided by payments. If the number is less than 1, the individual isn't accumulating more debt... not considering interest, of course. Probably something like .5 would be a more "comfortable" score. Something like .1 or less could be the top scoring bracket, which would be like someone making $200 in purchases but paying down $2000 in balances. Just sort of thinking out loud here...
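The purchases-to-payments idea fits in a few lines of Python. The bracket cutoffs (0.1, 0.5, 1.0) are only the numbers floated in the post, not part of any real scoring model, and interest is ignored:

```python
def spend_to_payment_ratio(purchases: float, payments: float) -> float:
    """Monthly purchases divided by monthly payments.

    Below 1.0 the revolving balance is shrinking (ignoring interest).
    """
    if payments <= 0:
        raise ValueError("payments must be positive")
    return purchases / payments

def bracket(ratio: float) -> str:
    # Hypothetical cutoffs taken from the brainstorm above.
    if ratio <= 0.1:
        return "top bracket"
    if ratio <= 0.5:
        return "comfortable"
    if ratio < 1.0:
        return "paying down"
    return "balance not shrinking"

for purchases, payments in [(200, 2_000), (100, 350), (1_500, 350)]:
    r = spend_to_payment_ratio(purchases, payments)
    print(f"{purchases}/{payments} = {r:.2f} -> {bracket(r)}")
```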

I guess that sort of gets away from my original idea of considering total revolving debt, but it could be a useful piece of data nonetheless.

Getting back to the original idea of total revolving debt, the equation could perhaps involve total revolving debt divided by total payments. Using my previous numbers again, $700 in total debt for the first guy divided by his $350 in payments = 2. The second guy's $11,000 divided by $350 in payments is about 31. This would mean that it would take the first guy about 2 months to pay off his total debt and the second guy a little over 2.5 years. Again, this doesn't account for any interest. I think a number such as this would be useful in considering risk, as anyone who carries a debt that may take them 2.5 years to pay off certainly represents a greater risk than someone who is likely to pay it off in 2 months... all other things being equal. I do like that considering these numbers doesn't involve income at all, so it can't be viewed as discriminatory.
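The debt-over-payments figure is a single division. A Python sketch using the post's numbers, assuming a fixed $350 monthly payment, no interest, and no new purchases (the function name is illustrative):

```python
def payoff_months(total_debt: float, monthly_payment: float) -> float:
    """Total revolving debt divided by monthly payments.

    A rough months-to-payoff figure that ignores interest and new purchases.
    """
    if monthly_payment <= 0:
        raise ValueError("monthly payment must be positive")
    return total_debt / monthly_payment

for debt in (700, 11_000):
    months = payoff_months(debt, 350)
    print(f"${debt:,}: {months:.1f} months ({months / 12:.1f} years)")
```

This gives 2 months for the first case and about 31.4 months (roughly 2.6 years) for the second.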


Re: Should utilization matter more than debt?

@Anonymous wrote:

I actually do think that income should be left out of scoring. Maybe the higher earner simply doesn't want to pay off their debt and plans on leaving for Mexico, burning the banks in the process. Income is not reliable enough to be of any use in regard to risk... and wouldn't they then also have to consider assets? For example, you have 2 people with equal credit profiles who both make 50k. Person A has no savings whatsoever, but person B has a 500k trust fund. Who is more likely to make payments? Just too many unknowns.

^ Agree 100%. Credit scores are defined as a numerical representation of creditworthiness, not wealth. If I were a lender, I would look at DTI and income in addition to score to assess capacity to pay back a loan and likelihood to follow through on obligations. Wealth does not equate to a commitment to follow through.

Ultimately, what goes into credit scoring and the relative weighting of factors is best left up to the statisticians who develop models based on probability using "big data". I would agree that revolving utilization, as currently represented, is highly flawed. A better predictor might be actual monthly expenditures (not statement balances) divided by credit limit, with revolver/transactor behavior as a factor modifier.
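One way to read that suggestion, sketched in Python: average monthly spending divided by credit limit, multiplied by a modifier when the cardholder revolves a balance. The `CardMonth` type, the averaging, and the 1.5 revolver multiplier are all hypothetical choices for illustration, not anything VantageScore or FICO publishes:

```python
from dataclasses import dataclass

@dataclass
class CardMonth:
    purchases: float        # actual spending during the month, not the statement balance
    credit_limit: float
    carried_balance: float  # balance left unpaid after the due date (0 for a transactor)

def spend_utilization(months: list[CardMonth], revolver_penalty: float = 1.5) -> float:
    """Average monthly spending divided by credit limit, scaled up by a
    hypothetical multiplier if a balance was carried in any month."""
    if not months:
        raise ValueError("need at least one month of data")
    ratios = [m.purchases / m.credit_limit for m in months]
    base = sum(ratios) / len(ratios)
    is_revolver = any(m.carried_balance > 0 for m in months)
    return base * (revolver_penalty if is_revolver else 1.0)

transactor = [CardMonth(500, 5_000, 0), CardMonth(300, 5_000, 0)]
revolver = [CardMonth(500, 5_000, 200), CardMonth(300, 5_000, 0)]
print(f"transactor: {spend_utilization(transactor):.2f}")
print(f"revolver:   {spend_utilization(revolver):.2f}")
```

A multiplier keeps the base spending ratio easy to interpret while letting revolving behavior count against it; an additive penalty would be an equally plausible design.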

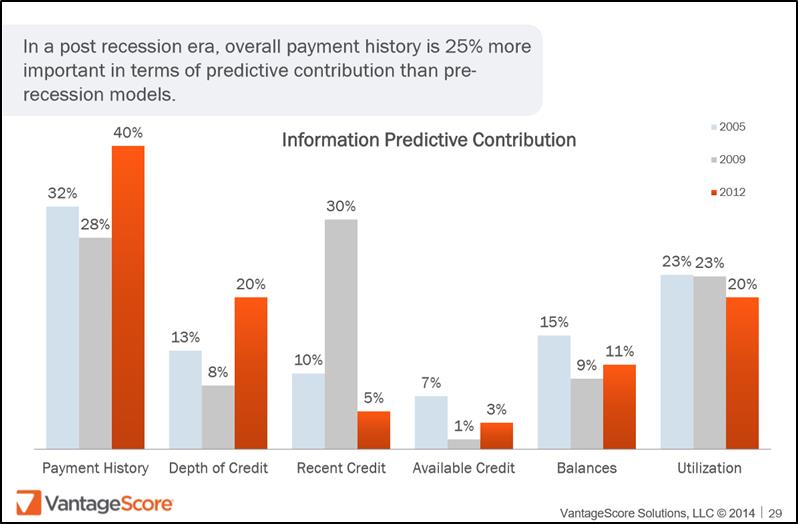

I pasted the below analysis from VantageScore in another thread but, it is a better fit here. It's maximizing the predictive ability of the model relative to population sub groups that sells a given model to lenders.

FICO 8: EQ 850, TU 850, EX 850

FICO 4: EQ 809, TU 823, EX 830; EX FICO 98: 842

FICO 8 BC: EQ 892, TU 900, EX 900

FICO 8 AU: EQ 887, TU 897, EX 899

FICO 4 BC: EQ 826, TU 858; EX FICO 98 BC: 870

FICO 4 AU: EQ 831, TU 872; EX FICO 98 AU: 861

VS 3.0: EQ 835, TU 835, EX 835

CBIS: EQ LN Auto 940, EQ LN Home 870, TU Auto 902, TU Home 950


Re: Should utilization matter more than debt?

@Anonymous wrote:

A hypothetical length of time required to pay off a balance is an interesting approach. After all, as the length of time increases, so does the probability of some unforeseen event such as job loss. Of course, so does the chance of winning the lottery, lol.

True, but I think the chances of losing one's job greatly exceed the chances of winning the lottery. Isn't there some crazy statistic on the percentage of lottery winners who end up broke? I'd like to sneak a peek at their FICO 08s.
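The quoted point, that a longer payoff window leaves more room for an unforeseen event, can be made concrete under a constant-hazard assumption: if an event has an independent probability p each month, the chance it happens at least once in n months is 1 - (1 - p)^n. A Python sketch; the 1% monthly job-loss probability is a made-up number for illustration, and the 2- and 31-month windows are the payoff times discussed earlier in the thread:

```python
def chance_of_event(monthly_probability: float, months: float) -> float:
    """Probability that an event with a constant, independent monthly chance
    occurs at least once during the period: 1 - (1 - p) ** n."""
    if not 0.0 <= monthly_probability <= 1.0:
        raise ValueError("monthly_probability must be between 0 and 1")
    return 1.0 - (1.0 - monthly_probability) ** months

p = 0.01  # hypothetical 1% monthly chance of job loss, for illustration only
for months in (2, 31):
    print(f"{months} months: {chance_of_event(p, months):.1%}")
```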